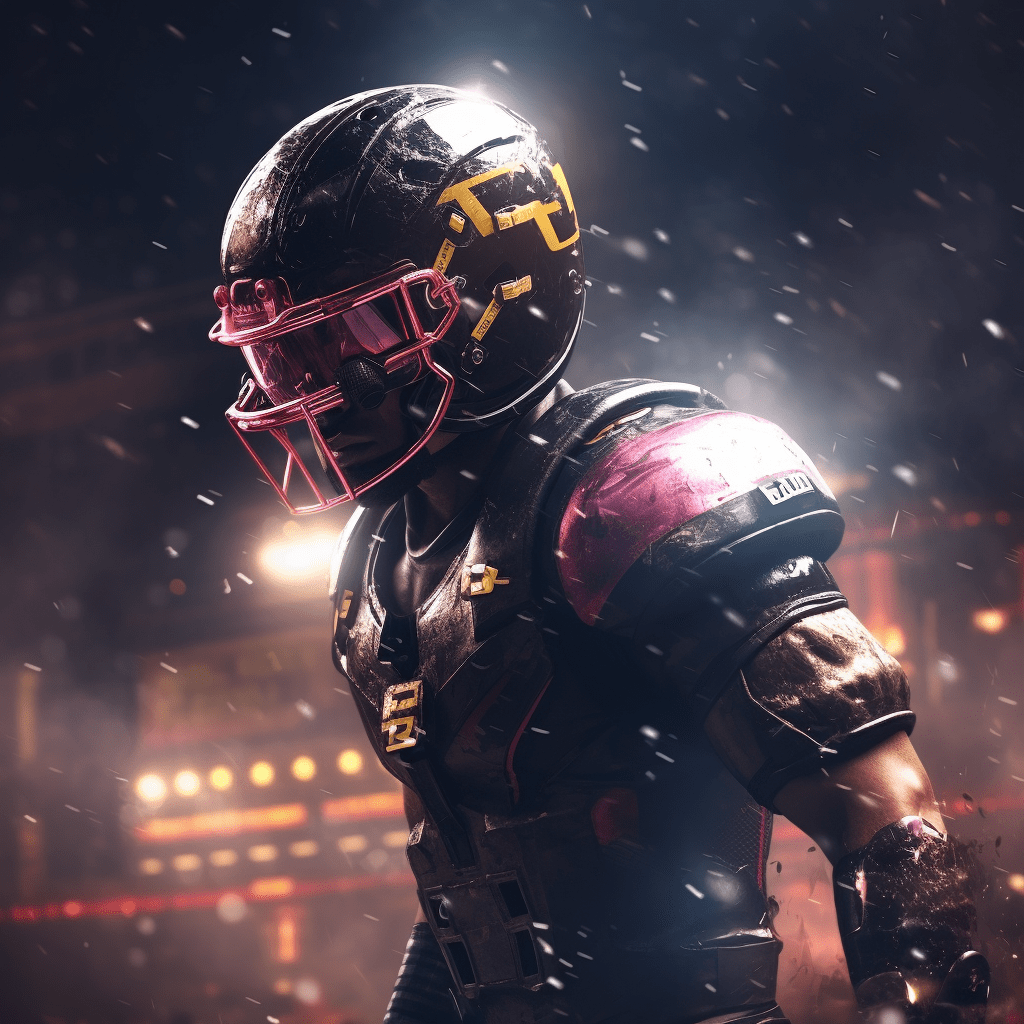

First and Thirty

NFL Betting, done brilliantly.

We handicap every game, every week.

And we do it for free.

Wager Recommendations

Quantitative handicapping

We leverage advanced NFL statistics alongside our in-house power rankings to handicap every matchup. Then we pick the best bets for your money.

Weekly Analysis

Insight on our picks

Knowing why you're betting is as important as knowing what you're betting. We give you full transparency into each one of our picks.

Results

Through the end of the Divisional Round, 2023 Season

Wins: 174

Losses: 155

Pushes: 7

ROI: 1.0%

ROI lower limit: -9.3%

ROI upper limit: 11.3%
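The page doesn't say how ROI or its lower and upper limits are calculated. The sketch below shows one way to get figures like these. It assumes three things: every graded bet risks one unit at standard -110 odds, pushes are left out because the stake is refunded, and the limits form a 95% normal-approximation interval. The function `roi_with_interval` and the -110 pricing are hypothetical. This is not necessarily how the numbers above were produced.

```python
import math

# Hypothetical assumption: each graded bet risks 1 unit at -110 odds,
# so a win earns 100/110 units of profit and a loss costs 1 unit.
WIN_PAYOUT = 100 / 110


def roi_with_interval(wins: int, losses: int, z: float = 1.96):
    """Return (roi, lower, upper) as fractions of units risked.

    Pushes are excluded (stake refunded). The interval is a normal
    approximation: roi +/- z * (per-bet standard deviation / sqrt(n)).
    """
    n = wins + losses
    roi = (wins * WIN_PAYOUT - losses) / n
    # Population variance of per-bet profit: E[x^2] - (E[x])^2.
    mean_sq = (wins * WIN_PAYOUT ** 2 + losses * 1.0) / n
    variance = mean_sq - roi ** 2
    stderr = math.sqrt(variance / n)
    return roi, roi - z * stderr, roi + z * stderr


if __name__ == "__main__":
    roi, lo, hi = roi_with_interval(wins=174, losses=155)
    print(f"ROI {roi:.1%}, range {lo:.1%} to {hi:.1%}")
```

Under these assumptions, 174 wins and 155 losses give an ROI of about 1.0%, with a range from roughly -9.3% to 11.3%. The range is wide because a few hundred bets is still a small sample.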

Super Bowl LVIII Forecasts

Sunday, Feb 11, 6:30 PM (CBS) at Allegiant Stadium in Las Vegas, Nevada

The model's prediction for SB LVIII is roughly a pick'em, with a slight lean toward KC at underdog odds. We don't recommend taking either side because of the house vigorish, but if you really must, take KC.
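The vigorish is the reason to pass on a near pick'em. The sketch below assumes both sides are priced at -110; the actual Super Bowl LVIII prices aren't given here. At that price, the two sides' implied probabilities add up to more than 100%, so a bettor has to win more than about 52.4% of the time just to break even. The helpers `implied_probability` and `overround` are hypothetical and exist only for illustration.

```python
def implied_probability(american_odds: int) -> float:
    """Break-even win probability implied by American odds."""
    if american_odds < 0:
        # Favorite-style price: risk |odds| to win 100.
        return -american_odds / (-american_odds + 100)
    # Underdog-style price: risk 100 to win `american_odds`.
    return 100 / (american_odds + 100)


def overround(odds_a: int, odds_b: int) -> float:
    """Combined implied probability of both sides; the excess over 1.0 is the vig."""
    return implied_probability(odds_a) + implied_probability(odds_b)


if __name__ == "__main__":
    # Hypothetical pricing: both sides of a pick'em at -110.
    print(f"Break-even win rate at -110: {implied_probability(-110):.2%}")
    print(f"Combined implied probability: {overround(-110, -110):.2%}")
```

With both sides at -110, a model that sees the game as close to 50/50 needs to lean more than about 2.4 percentage points toward one side before that side clears the break-even bar.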

Stay tuned for props and fun SB wagers in the Analysis section below.

Analysis

February 9, 2024

Brady, Super Bowl LVIII, Pro

The bets are determined by checking a wide variety of projections against my own knowledge of how players will be utilized given game context. The player prop lines were taken from this site (or were manually hunted). When analyzing projections from fantasy sites, you'll often find that the...

Champ Round 2023, Recap, Brady

Welcome to the recap blog. Weekly results are a silly thing to track -- the small sample and high variance nature of the NFL makes them totally irrelevant. However, it's always fun looking back at how the games went, and I like to see where we had closing line value. Curious how we did in...

January 27, 2024

Brady, Prop Bets, Champ Round 2023

The bets are determined by checking a wide variety of projections against my own knowledge of how players will be utilized given game context. The player prop lines were taken from this site. When analyzing projections from fantasy sites, you'll often find that the projections are higher than...

January 27, 2024

Brady, Recommended Wager, Champ Round 2023

Detroit (+7.0) @ San Francisco

Power rankings: Detroit 7, San Fran 2

When Detroit has the ball: The Lions offense is 5th in DVOA (7th pass, 4th run) and 8th in EPA/play. They feature one of the best offensive lines in football, although there are a few injuries to make note of. C Frank Ragnow -...

January 26, 2024

Brady, Division Round 2023, Recap

Welcome to the recap blog. Weekly results are a silly thing to track -- the small sample and high variance nature of the NFL makes them totally irrelevant. However, it's always fun looking back at how the games went, and I like to see where we had closing line value. Curious how we did in...

Take a deep dive into our process.

DomModel

Our model's prediction for every game, every week.

@FirstAndThirty

Contributors

These are the people who do the things

Dom

Co-founder

Numbers nerd, spreadsheet humper, named the model after himself, modest

Brady

Co-founder

NFL news geek, power ranker, blog writer, caption writer